Videotestsrc pattern=2 ! 'video/x-raw, width=(int)1920, height=(int)1080, format=(string)UYVY, framerate=(fraction)30/1' ! queue ! st. Because if I replace all the v4l2src with videotestsrc, this heavy use of memcpy disappear (below). Seems the src element has a lot to do with this. We uses Toshiba TC358743 to capture video. My question is on what circumstance cudaMemcpyAsync will use memcpy so heavily? How to avoid this. Then I gdb it a bit to see the callstack, and it seems all the memcpy is from inside cudaMemcpyAsync. Then I use oprofile to see what happend, and found that memcpy takes most of the cpu time: CPU: ARM Cortex-A57, speed 2035.2 MHz (estimated)Ĭounted CPU_CYCLES events (Cycle) with a unit mask of 0x00 (No unit mask) count 100000

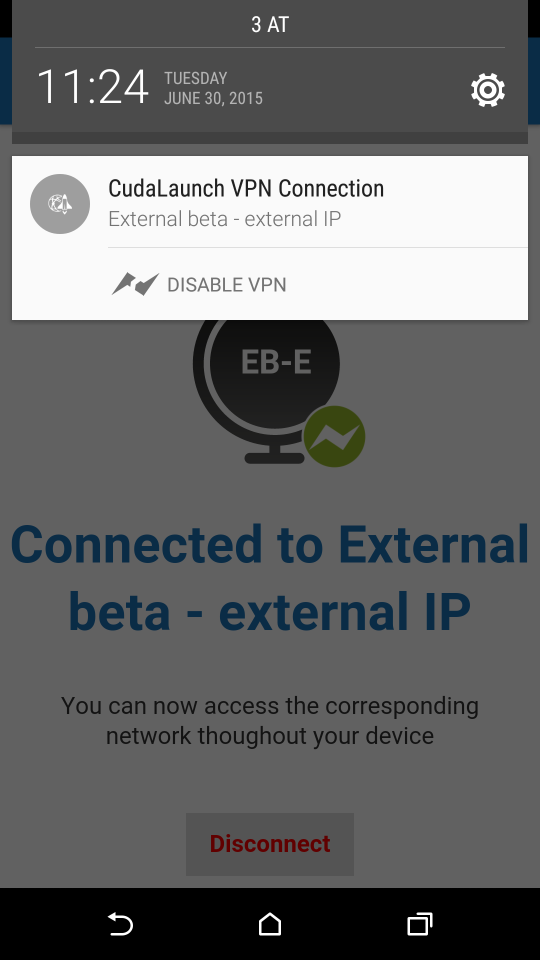

The problem here is that cudaMemcpyAsync has very low throughput, 400~700MB/s (from nvprof and nv visual profiler). It uses cudaMemcpyAsync and streams for concurrency. Videostitcher is an element that uses cuda to stitch multiple inputs. Note, if you are used to nvprof, try to copy/paste your nvprof cmd via: nsys nvprof Īnd Nsight Systems would try to translate the legacy nvprof command.So I’m working on a live video stitching gstreamer pipeline on TX2, and having performance issue. (allows you to hover over a call and get the backtrace) You can also specify thresholds in ns which defines a threshold which the kernel must execute before backtraces are collected. -cudabacktrace=true: When tracing CUDA APIs, enable the collection of a backtrace when a CUDA API is invoked.-capture-range=cudaProfilerApi and -stop-on-range-end=true: profiling will start only when cudaProfilerStart API is invoked / Stop profiling when the capture range ends.-t cuda,nvtx,osrt,cudnn,cublas: selects the APIs to be traced.The arguments can be found in the linked CLI docs. (Thanks to Michael Carilli to create this cmd a while ago ) The CLI options for nsys profile can be found here and my “standard” command as well as the one used to create the profile for this example is: nsys profile -w true -t cuda,nvtx,osrt,cudnn,cublas -s cpu -capture-range=cudaProfilerApi -stop-on-range-end=true -cudabacktrace=true -x true -o my_profile python main.py To create the profile I’m using Nsight System 2020.4.3.7 via the CLI. 
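As a small illustration of the command above, here is a sketch that assembles the same `nsys profile` invocation as an argument list, so it can be reused from a script. The function name `build_nsys_command` and its parameters are hypothetical helpers for this example. The flags are the ones quoted in the text, and the double-dash spelling of the long options is an assumption about how they were originally written.

```python
import shlex


def build_nsys_command(script="main.py", output="my_profile",
                       traces=("cuda", "nvtx", "osrt", "cudnn", "cublas")):
    """Assemble the `nsys profile` argument list quoted in the text.

    `build_nsys_command` is a hypothetical helper, not part of Nsight Systems.
    """
    return [
        "nsys", "profile",
        "-w", "true",
        "-t", ",".join(traces),              # APIs to be traced
        "-s", "cpu",
        "--capture-range=cudaProfilerApi",   # start on cudaProfilerStart
        "--stop-on-range-end=true",          # stop when the capture range ends
        "--cudabacktrace=true",              # collect backtraces for CUDA API calls
        "-x", "true",
        "-o", output,                        # name of the generated profile
        "python", script,
    ]


if __name__ == "__main__":
    # Print the command as a single shell-quoted line.
    print(shlex.join(build_nsys_command()))
```

To actually launch the profiler, the list could be passed to `subprocess.run(build_nsys_command(), check=True)`, which assumes `nsys` is installed and on the `PATH`.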
This topic describes a common workflow to profile workloads on the GPU using Nsight Systems. As an example, let's profile the forward, backward, and optimizer.step() methods using the resnet18 model from torchvision.

To annotate each part of the training we will use NVTX ranges via the `torch.cuda.nvtx.range_push` / `torch.cuda.nvtx.range_pop` operations. These ranges work as a stack and can be nested.

Also, we are usually not interested in the first iterations, which might add overhead to the overall training due to memory allocations, cudnn benchmarking etc., so we start the profiling after a few iterations via `torch.cuda.cudart().cudaProfilerStart()` and stop it at the end via `torch.cuda.cudart().cudaProfilerStop()`.

The key lines of the complete code snippet are:

- `import torch`
- `data = torch.randn(64, 3, 224, 224, device=device)`
- an optimizer created over `model.parameters()` with `lr=1e-3`
- `if i == warmup_iters: torch.cuda.cudart().cudaProfilerStart()` (start profiling after 10 warmup iterations)
- `if i >= warmup_iters: torch.cuda.nvtx.range_push("iteration{}".format(i))`
- `if i >= warmup_iters: torch.cuda.nvtx.range_push("opt.step()")`
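The warmup-then-profile pattern with nested NVTX ranges can be sketched as below. This is a minimal illustration, not the original snippet: `profiled_loop` and `RecordingNvtx` are hypothetical names, and the range and profiler objects are passed in as parameters so the control flow can be followed (and run) without a GPU.

```python
def profiled_loop(steps, nb_iters, warmup_iters, nvtx, profiler_start, profiler_stop):
    """Run every (name, callable) pair in `steps` for `nb_iters` iterations.

    Profiling and range annotation begin once `warmup_iters` iterations have run.
    `nvtx` is any object exposing range_push(msg) and range_pop().
    """
    for i in range(nb_iters):
        if i == warmup_iters:
            profiler_start()                  # start profiling after the warmup
        active = i >= warmup_iters
        if active:
            nvtx.range_push("iteration{}".format(i))   # outer range
        for name, fn in steps:
            if active:
                nvtx.range_push(name)         # nested range for this step
            fn()
            if active:
                nvtx.range_pop()              # closes the step range
        if active:
            nvtx.range_pop()                  # closes the iteration range
    profiler_stop()


class RecordingNvtx:
    """Hypothetical stand-in that records nesting instead of emitting NVTX ranges."""

    def __init__(self):
        self.stack = []
        self.events = []

    def range_push(self, msg):
        self.stack.append(msg)
        self.events.append("/".join(self.stack))

    def range_pop(self):
        self.stack.pop()


if __name__ == "__main__":
    nvtx = RecordingNvtx()
    steps = [("forward", lambda: None),
             ("backward", lambda: None),
             ("opt.step()", lambda: None)]
    profiled_loop(steps, nb_iters=3, warmup_iters=2, nvtx=nvtx,
                  profiler_start=lambda: print("profiler start"),
                  profiler_stop=lambda: print("profiler stop"))
    print(nvtx.events)
```

With PyTorch on a CUDA machine, the same loop could be driven by passing `torch.cuda.nvtx` as `nvtx` and `torch.cuda.cudart().cudaProfilerStart` / `torch.cuda.cudart().cudaProfilerStop` as the two callbacks, with the step callables wrapping the forward pass, `loss.backward()`, and `optimizer.step()`.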
